LongLive: Real-time Interactive Long Video Generation

Authors: S. Yang, W. Huang, R. Chu, Y. Xiao, Y. Zhao, X. Wang, M. Li, E. Xie, Y. Chen, Y. Lu, S. Han, Y. Chen

Status: Submitted to ICLR 2026.

Preprint: arXiv:2509.22622

Overview

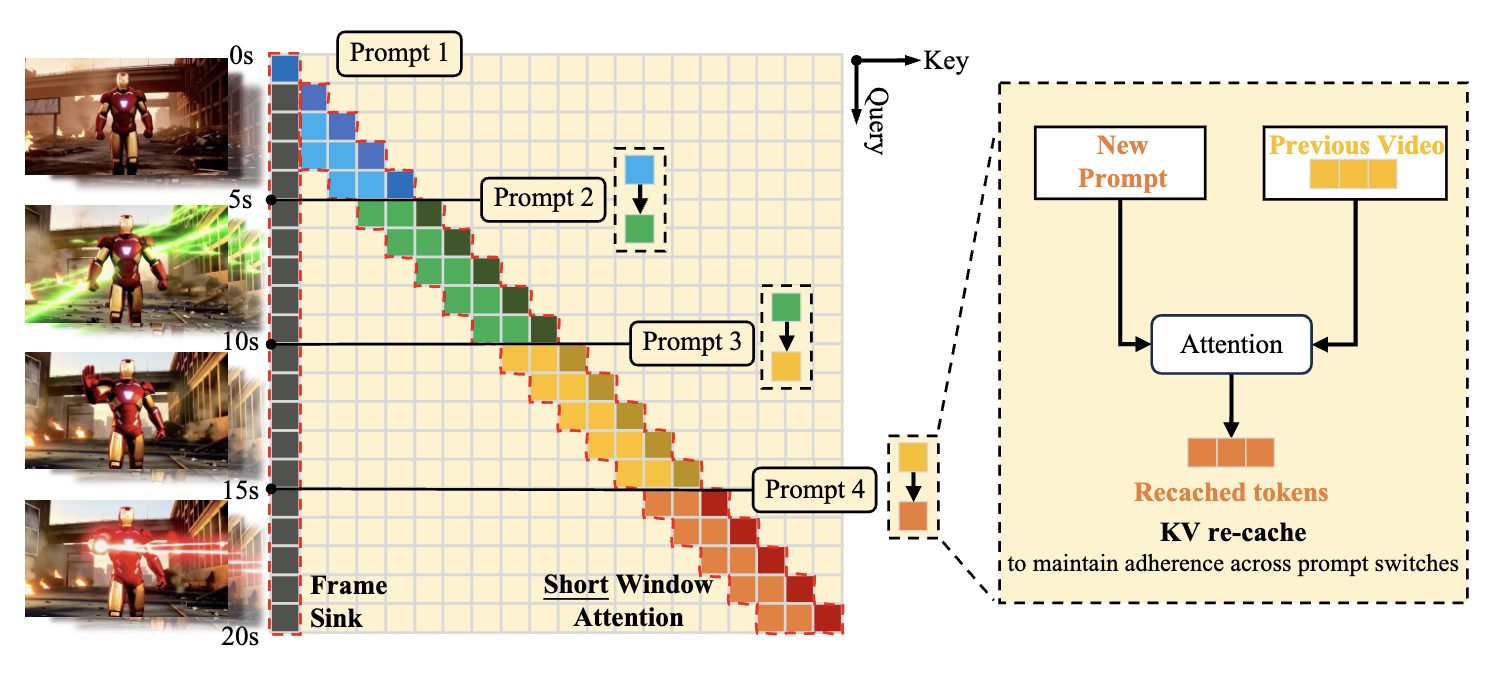

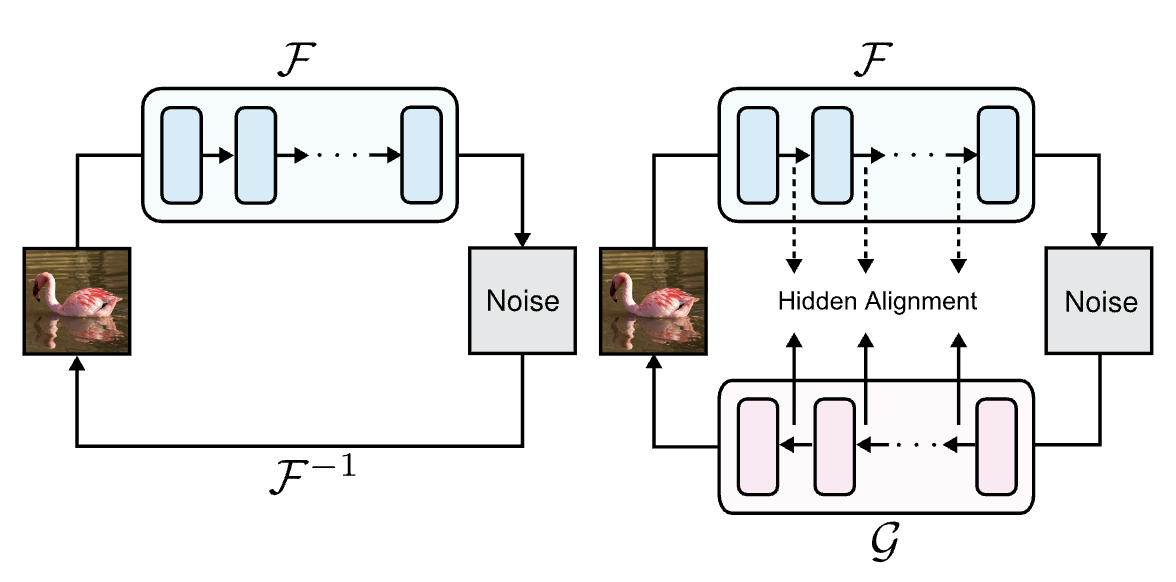

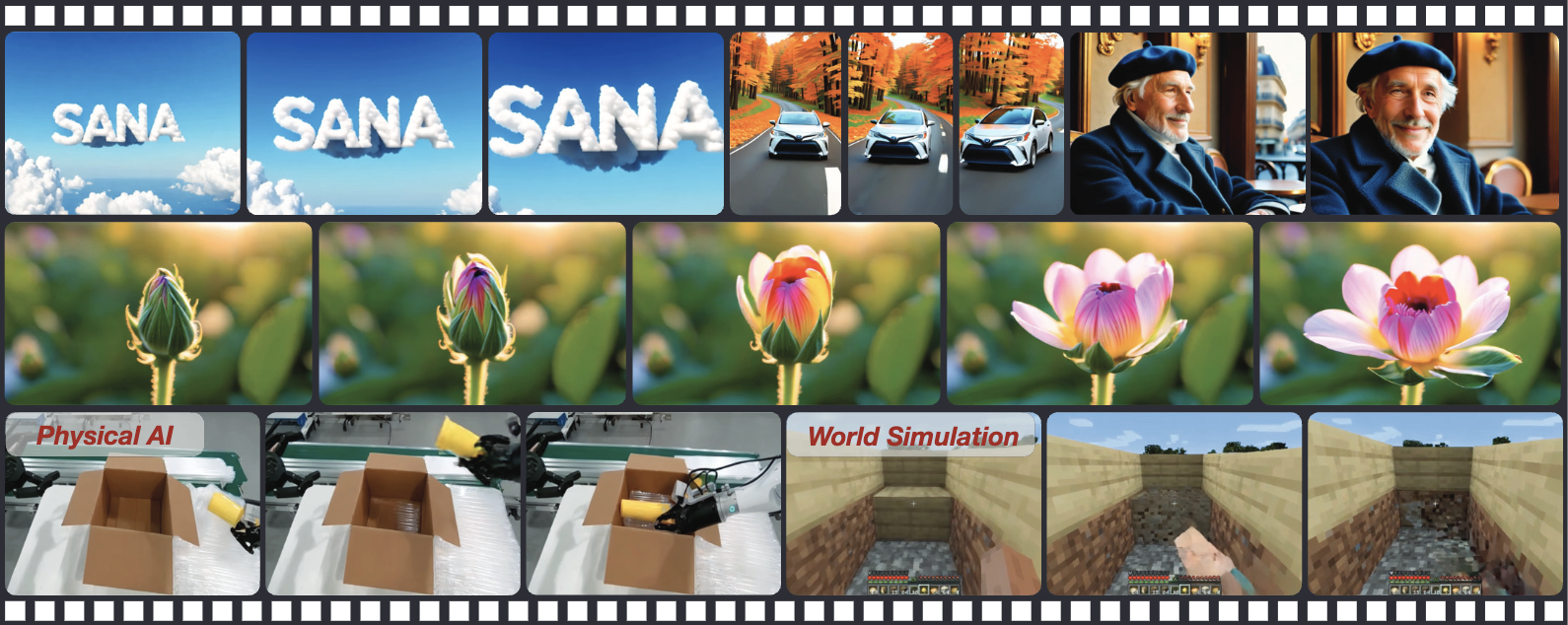

This paper presents LongLive, a frame-level autoregressive framework for real-time generation of long, consistent videos under interactive user control. To address the trade-off between efficiency and long-term coherence, the authors introduce a KV-recache mechanism that updates the cached states when the prompt switches, so that narrative transitions stay smooth and free of visual discontinuities. This is paired with two further components: streaming long tuning, a training strategy that mimics inference by generating extended sequences in order to mitigate error accumulation, and short-window attention with a frame sink, which reduces computational cost while preserving global context. The resulting system fine-tunes a 1.3B-parameter model to generate up to 240 seconds of video on a single H100 GPU, reaching an inference speed of 20.7 FPS and outperforming existing baselines in both temporal consistency and interactive responsiveness.
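To make the short-window attention with a frame sink more concrete, here is a minimal sketch of which past frames a newly generated frame could attend to. It is an illustration under stated assumptions, not the authors' implementation. The function names, the `window` and `num_sink` parameters, and the choice to count the window in whole frames are all hypothetical, and the values the paper actually uses are not given in this summary.

```python
def visible_frames(t, window, num_sink):
    """Return the sorted indices of frames that frame t may attend to.

    Assumption (not from the paper): attention is causal, the first
    `num_sink` frames are always kept as a "frame sink", and the most
    recent `window` frames (including frame t itself) form the local window.
    """
    # Sink frames: the earliest frames, kept for the whole rollout.
    sink = set(range(min(num_sink, t + 1)))
    # Local window: the last `window` frames up to and including t.
    start = max(0, t - window + 1)
    local = set(range(start, t + 1))
    return sorted(sink | local)


def cache_size(t, window, num_sink):
    """Number of frames whose keys/values must stay cached at step t."""
    return len(visible_frames(t, window, num_sink))


if __name__ == "__main__":
    # Hypothetical settings chosen only for illustration.
    for t in (0, 2, 10, 100):
        print(t, visible_frames(t, window=4, num_sink=1))
```

Under these assumptions the number of cached frames never exceeds `window + num_sink`, regardless of how long the video grows. This bounded cache is the reason a windowed scheme keeps the per-frame attention cost constant, while the sink frames keep some of the earliest context available.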